Spring/Summer 1999

Volume 6, Issue 2

Spring/Summer 1998

Volume

3, Issue 1

January 1995

Volume

2, Issue 4

October 1994

Volume

2, Issue 1

January 1994

Scalable I/O Initiative Progress and Highlights

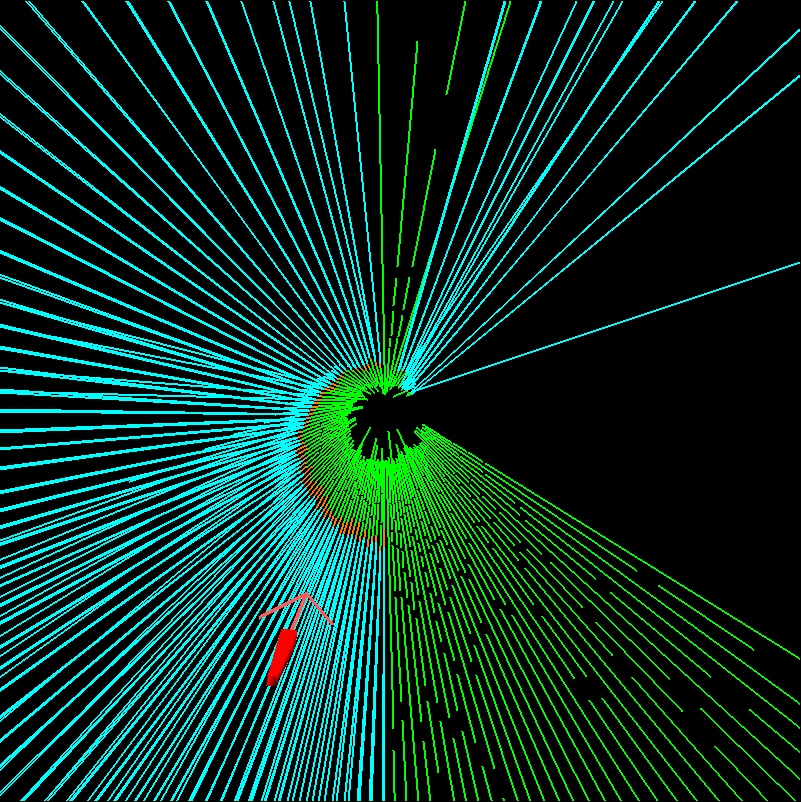

This picture illustrates data taken with the PABLO performance evaluation system during the execution of a Hartree-Fock calculation. |

The Scalable I/O Initiative, a collaboration of agencies, vendors, and institutions that includes CRPC researchers across the country, was formed in 1994 to overcome the biggest obstacle to effective use of teraflops-scale computer systems by scientists and engineers--getting data into, out of, and around such systems fast enough to avoid severe bottlenecks. The initiative is determining applications requirements and using them to guide the development of system support services, file storage facilities, high-performance networking software, programming language features, and compiler techniques.

Using the PABLO performance evaluation system, application developers and computer scientists are cooperating to determine the I/O characteristics of a comprehensive set of I/O-intensive applications. These characteristics are guiding the development of parallel I/O features for I/O-related system software components, including compilers, runtime libraries, parallel file systems, high-performance network interfaces, and operating system services. Proposed new features have been evaluated by measuring the I/O performance of full-scale applications using prototype implementations of these features on full-scale massively parallel computer systems.

Supported by DARPA, DOE, NASA, and NSF, the initiative involves SPP vendors that include HP, IBM, and SGI/Cray. In addition, the researchers are active participants in many federally supported activities, including DARPA programs such as Quorum, the Department of Defense Modernization Program, the NSF-sponsored Partnerships in Advanced Computational Infrustructure (PACI), and the DOE's Accelerated Strategic Computing Initiative.

Through these interactions, the importance of Scalable I/O has become more widely recognized. For example, research supported under the initiative influenced the functionality incorporated in MPI-2. Many SIO participants actively contributed to the Message Passing Interface Forum. As a result of their arguments, MPI adopted parallel I/O into its scope. This led to the incorporation of MPI-IO, a mid-level, parallel I/O application program interface developed in part by SIO researchers, into the second version of the MPI specification.

Following are highlights of other recent accomplishments by the Scalable I/O as it winds up its fourth year.

Performance Evaluation

The PABLO performance evaluation system has been enhanced to collect and analyze detailed application and physical I/O performance data, with emphasis on extension to new hardware platforms and augmentation of tools to capture and process physical I/O data via a device driver instrumentation toolkit. An extensive comparative I/O characterization of parallel applications was conducted to understand the interactions among disk hardware configurations, application structure, file system APIs, and file system policies. This study showed that achieved I/O performance is strongly sensitive to small changes in access patterns or hardware configurations. Based on this study, standard I/O benchmarks are being developed to capture common I/O patterns. The original testbeds for the Scalable I/O Initiative, CACR's Intel Paragon and Argonne National Laboratory's (ANL's) IBM SP, have been augmented by the Center for Advanced Computing Research's (CACR's) HP Exemplar.CLIP: A Checkpointing Tool

Checkpointing is one of the most I/O-intensive component for large-scale applications on scalable parallel systems. A checkpoint and restart tool, called CLIP, has been developed for Fortran or C applications using either NX or MPI message-passing libraries on the Intel Paragon. It uses novel techniques such as memory exclusion to substantially reduce the amount of data to be checkpointed. Tests show that CLIP is simple to use and its performance matches application-specific tools.Distributed Frame Buffer

A multi-port frame buffer has been completed for the Intel Paragon. Pixel I/O bandwidth has been a bottleneck with the traditional HIPPI frame buffer approach to performing 3D rendering on a multi-computer. This multi-port frame buffer has the capability to do Z-buffering in hardware which enables both sort-middle and sort-last parallel rendering algorithms. Experiments, using Parallel Graphics Library (PGL) show that the four-port prototype frame buffer can deliver applications about 600 Mbytes/sec.Memory Server for the Paragon

A memory server that allows applications to use remote physical memory as the backing store of its virtual memory system has been completed and released for the Intel Paragon. Swapping a page with the memory server takes 1.4 msec compared to about 27 msec using the traditional virtual memory system. A fine-grained thread package has been developed for the memory server to schedule programs for locality. Tests with applications show that this approach is effective. A shared virtual memory system, which allows applications written for shared-memory multiprocessors to run on the Intel Paragon, was also completed. The system uses a novel coherence protocol called home-based lazy release consistency protocol. All SPLASH-2 benchmark programs have exhibited good speedups.User-level Network Services

Experiments with a user-level TCP/IP that bypasses a centralized network server demonstrated speedups of a factor of two to three for a single TCP connection and scaling with multiple connections. The structure of the Intel Paragon OS (how it divides functionality between the Unix server and emulation library) was determined to be incompatible with a complete/robust user-level TCP/IP. An implementation of decomposed TCP/IP and UDP network services was completed for the Intel Paragon. This implementation allows a client program to send or receive network packets directly, without sending the data through the Intel Paragon server. However, due to architectural deficiencies in the original single server, the current implementation of the decomposed server only runs on Intel Paragon's server nodes.SIO Low-Level API

The definition of the Scalable I/O Low-Level Parallel I/O API was completed and released for review by the community. This API is simple and yet provides enough mechanisms to build high-level, efficient APIs. A reference implementation of the Scalable I/O Low-Level API 1.0 on the Paragon has been implemented, tested, and released. A parallel file system was implemented using the Scalable I/O Low-Level API reference implementation on the Paragon. This file system uses the same API as the Intel Paragon PFS. The results of performance experiments indicated that this PFS is more efficient than the Intel Paragon PFS and demonstrated that the Scalable I/O Low-Level API is an efficient and effective API. In addition, ADIO has been implemented using the Scalable I/O Low-Level API on the Intel Paragon.ADIO & Romio

The specification of the Abstract Device for I/O (ADIO) was completed and implemented on the Intel Paragon, IBM SP, NFS, and Unix file systems. A portable implementation of Intel PFS and IBM PIOFS interfaces was completed using ADIO. A portable implementation of most features of the I/O section of the MPI-2 specification, including non-blocking and collective operations, was completed. This system, called Romio, was based on ADIO and works with any implementation of MPI, in particular, the Intel Paragon, IBM SP, SGI Origin 2000, HP Exemplar, and networks of workstations. As high-speed networks make it easier to use distributed resources, it is increasingly common that applications and their data are not colocated, causing manual staging of data to and from remote computers. An initial implementation of RIO, a prototype Remote I/O library based on ADIO, was completed. Rather than introducing a new remote I/O paradigm, RIO allows programs to use the MPI-IO parallel I/O interface to access remote file systems and use the Nexus communication library to interface to configuration and security mechanisms provided by Globus. An application study has shown that RIO provides an alternative to staging techniques and can provide superior performance.I/O Extensions to dHPF

A first implementation of end-to-end out-of-core arrays in the dHPF research compiler was completed. The current version works only for small kernel programs, but will be enhanced to accept more complex programs over the next year. The compiler produces calls to the PASSION I/O library to move the data in and out of memory as needed. PASSION has been implemented on the Intel Paragon and the IBM SP and was used to implement a Hartree-Fock code, in which the I/O time was reduced to as little as 10 percent of the original I/O time on the Paragon. In another application at Sandia National Laboratory, users were able to obtain application level bandwidth of 110 Mbytes/sec out of a maximum possible of 180 Mbytes/sec on three I/O nodes of the ASCI Red System.Active Data Repository

The development of a framework and a first prototype of the Active Data Repository were completed. The Active Data Repository framework, which is designed to support the optimized storage, retrieval, and processing of sensor and simulation datasets, supports various types of processing, including data compositing and projection and interpolation operations. This framework was used to prototype a sensor data metacomputing application in which a parallelized vegetation classification algorithm, executing on an ATM-connected Digital Alpha workstation network, used the MetaChaos metacomputing library to access the IBM SP-based Active Data Repository prototype.Future Directions

Through the end of this year, the Scalable I/O Initiative will emphasize vertical integration of the different software technologies described above (e.g., Scalable I/O Low-Level Parallel I/O API, Romio implementation of MPI-2 I/O functionality, RIO for remote file access, out-of-core arrays in dHPF, PASSION, and the Active Data Repository) to investigate I/O scalability in both local- and wide-area environments. Just as the Scalable I/O Initiative built upon Caltech's NSF Grand Challenge Application Group, ``Parallel I/O Methodology for I/O-Intensive Grand Challenge Applications," the Scalable I/O Initiative is the basis of new activities in ASCI Strategic Alliances and ASCI Centers of Excellence.For more information, see http://www.cacr.caltech.edu/SIO/.